Communication (from the Latin communicare, meaning to share, itself derived from communis, meaning common) is an essential part of what defines us as human beings. In all societies, people use language to communicate; it is the medium through which we share emotions, experiences, and affections. Throughout history, different regions have used different languages at different times. Yet every civilization has had a lingua franca, a common language that enables people of different mother tongues to communicate.

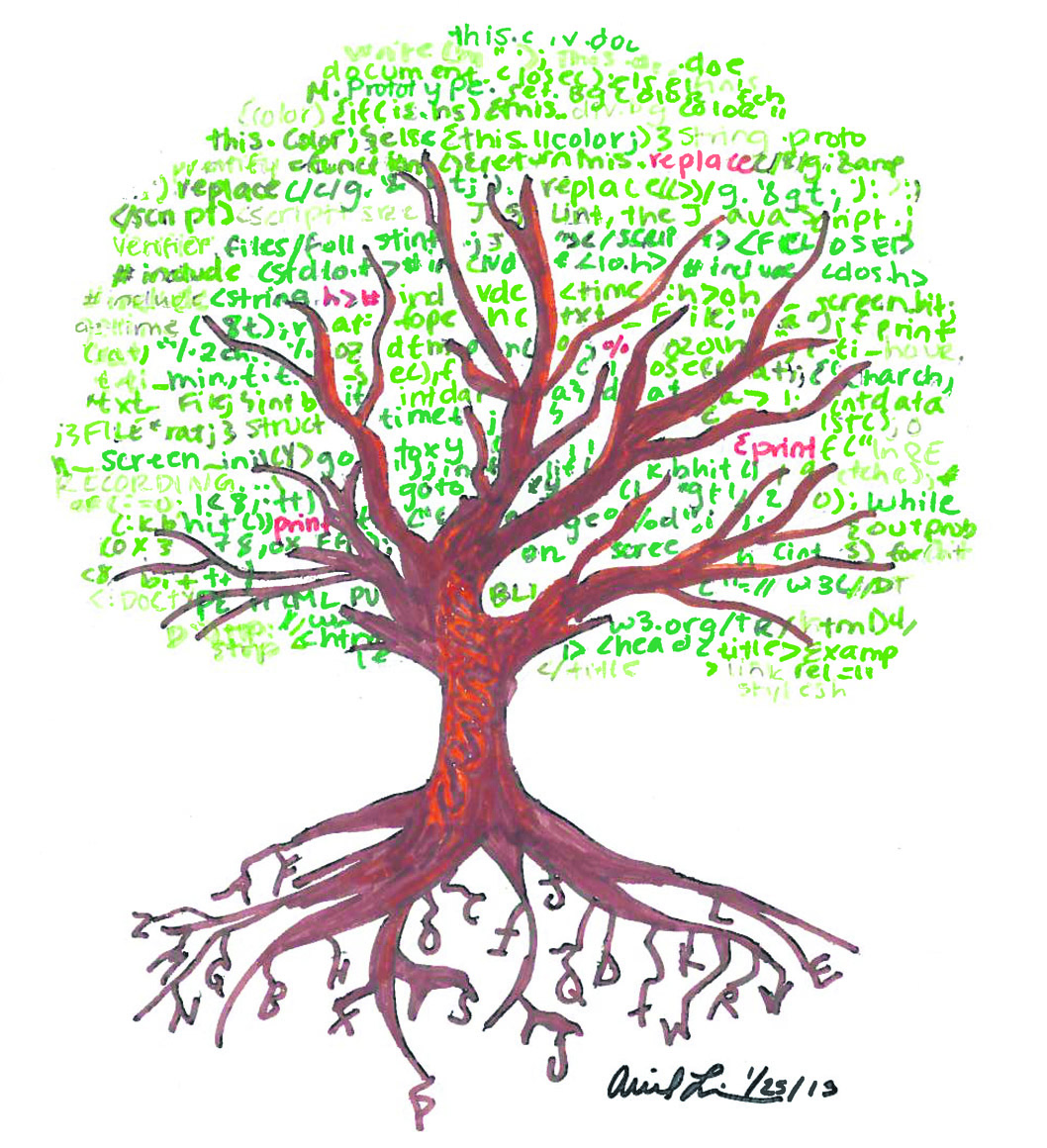

In today’s technology-driven world, we are in constant communication through computers. Financial markets can move at the click of a mouse, and I can Skype with my parents before going to sleep. Programming and markup languages such as Java, C, and HTML all have clear roots in the English language.

Origin

The world’s first computer program is credited to an English mathematician, Augusta Ada Byron, better known as Ada Lovelace. In the 1840s she worked on Charles Babbage’s Analytical Engine, a proposed mechanical general-purpose machine whose design was the first to include an arithmetic logic unit, control flow, and integrated memory. Another English speaker, the American Dr. Grace Hopper, worked on the Bureau of Ordnance’s Computation Project at Harvard. She is credited with developing programs for the first automatically sequenced digital computer, the IBM-built Mark I. Used in the U.S. Navy’s wartime efforts during World War II, it was the first computer operated on a large scale, setting a worldwide precedent.

Rather than dealing with specific memory addresses, stacks, and registers, a high-level programming language focuses on usability, offering a simpler way to program and use the computer system. In 1945, a German civil engineer named Konrad Zuse designed the first high-level programming language, Plankalkül (“Plan Calculus”). Unfortunately, due to wartime and postwar conditions in Germany, Plankalkül was not published at the time, leaving the door open for another language to become the foundation of high-level programming.
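To make the contrast concrete, here is a minimal sketch in Python. The toy register machine, its instruction names (LOAD, ADD, MUL, STORE), and both function names are invented for illustration and do not correspond to any real machine or to Plankalkül itself. Both functions compute (a + b) × c; the first spells out every memory cell and register, while the second simply states the formula.

```python
def run_low_level(a, b, c):
    """Compute (a + b) * c on a hypothetical register machine."""
    memory = {0: a, 1: b, 2: c, 3: 0}      # numbered memory cells
    registers = {"R0": 0, "R1": 0}         # two working registers
    program = [
        ("LOAD", "R0", 0),     # R0 <- memory[0]
        ("LOAD", "R1", 1),     # R1 <- memory[1]
        ("ADD", "R0", "R1"),   # R0 <- R0 + R1
        ("LOAD", "R1", 2),     # R1 <- memory[2]
        ("MUL", "R0", "R1"),   # R0 <- R0 * R1
        ("STORE", "R0", 3),    # memory[3] <- R0
    ]
    for op, x, y in program:
        if op == "LOAD":
            registers[x] = memory[y]
        elif op == "ADD":
            registers[x] += registers[y]
        elif op == "MUL":
            registers[x] *= registers[y]
        elif op == "STORE":
            memory[y] = registers[x]
    return memory[3]


def run_high_level(a, b, c):
    """The same computation, written the way a high-level language allows."""
    return (a + b) * c
```

Both versions return the same result for the same inputs; the difference lies entirely in how much of the machine the programmer has to think about.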

Almost a decade later, IBM created its Mathematical FORmula TRANslation System, abbreviated to FORmula TRANslation and further shortened to FORTRAN. Initially developed in 1954 to compute lunar positions, it is now regarded as the oldest high-level programming language ever published.

Development of English-based languages

After witnessing the potential of computers during World War II, Europe and North America wanted a universal computing language that both continents could share. Various languages were proposed, including IBM’s FORTRAN and Lisp (an American language used in the early development of artificial intelligence for the military). At a 1958 conference in Zurich, however, both sides agreed to adopt ALGOL, short for ALGOrithmic Language, as the universal standard. ALGOL was another English-based language, developed by a team of American and European scientists.

A dispute then unfolded between IBM and the committee. IBM had committed enormous resources to developing FORTRAN and was reluctant to forfeit its investment. It claimed that ALGOL’s user experience was incomplete and required further development before the language could be considered the standard. The committee, however, stuck to its decision.

ALGOL was slow to catch on, and its use was initially concentrated in academia. IBM, on the other hand, expanded globally and promoted FORTRAN as the lingua franca of the industrial computing world.

The next big step in programming was real-time computing. In the aftermath of the Cuban Missile Crisis and during the later years of the Cold War, the U.S. military took a particular interest in it, because military tactics of the time depended on receiving information as quickly as possible. The military wanted a platform that could control tanks, planes, missiles, and other assets in a synchronized fashion. It funded Project Ada, in which thousands of computer scientists collaborated over the course of several years. Project Ada formed the basis for real-time computing around the world, yet another English-based standard.

In the post-WWII world, American superiority in commerce, technology, and military power can be seen as the cornerstone of the English language’s dominance in technology. Universal computer programming standards have evidently been defined by players such as American research institutions, private and public companies (such as IBM), and projects funded by the U.S. military. That said, other countries have, both in the past and the present, made pushes of their own.

The Non-English Languages

Linguistic factors can affect how a programming language is built. For example, one byte (8 bits, giving 256 distinct values) is more than enough to represent every character used in written English. The Hanyu Da Zidian Chinese dictionary, as published in 1989, contained 54,678 distinct Chinese characters. A Chinese programming language would therefore need at least two bytes per character (giving 65,536 distinct values), a fundamental difference that would set it apart from an English-based language. Some may question the value of implementing a language that requires so many characters.
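The arithmetic behind that claim can be checked with a short Python sketch. It assumes a simple fixed-width encoding in which every character gets a code of the same size; real-world encodings such as UTF-8 are variable-width, so this illustrates the counting argument rather than how text is stored in practice. The function name and the figure of 52 letters (26 upper-case plus 26 lower-case) are illustrative choices, not taken from any standard.

```python
def bytes_needed(distinct_symbols: int) -> int:
    """Smallest whole number of bytes that gives each symbol its own code."""
    if distinct_symbols < 1:
        raise ValueError("need at least one symbol")
    # Codes run from 0 to distinct_symbols - 1, so count the bits
    # required to write the largest code.
    bits = max(1, (distinct_symbols - 1).bit_length())
    return (bits + 7) // 8  # round up to whole bytes


ENGLISH_LETTERS = 26 * 2    # upper- and lower-case letters only (assumption)
HANYU_DA_ZIDIAN = 54_678    # character count cited for the 1989 dictionary

print(bytes_needed(ENGLISH_LETTERS))   # 1
print(bytes_needed(HANYU_DA_ZIDIAN))   # 2
```

Since 2^15 = 32,768 is smaller than 54,678 and 2^16 = 65,536 is larger, sixteen bits, or two bytes, is the minimum under this assumption.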

That is not to say that non-English-based programming languages do not exist; they do. A quick Google search turns up many examples in many languages. Starting in the 1960s, the Soviet Union made many attempts at developing its own languages. ANALITIK, for instance, was designed domestically at the Institute of Cybernetics in Kiev, yet many have noted its resemblance to Western ALGOL-like languages. Research and development efforts in other countries such as Brazil, India, South Korea, and Singapore have also produced capable, growing software industries. These up-and-coming players will undoubtedly participate on the global stage and may in the future put a dent in the English-dominated industry.

Does it matter?

When the major programming languages are based on English, one wonders whether fluency in English, or being a native speaker, matters. Interviews with members of the McGill community suggest that it can have an effect, but that the effect varies.

“I think programming code is sufficiently divorced enough from English such that the actual language of the keywords used is irrelevant,” said Bentley Oakes, a second-year Master’s student in computer science.

However, Michael Misiewicz (MSc ’12), a McGill graduate who now works at AppNexus Inc. in New York City, claimed that “… nearly everything to do with computers has been invented or designed in the U.S., mostly everything is in English. I’d say proficiency in written English is a requirement for complex technology work.”

What’s next

Is the world moving away from English as its lingua franca? As with anything, it is difficult to predict. But as long as significantly more scientific research is published in English than in other languages, and many computer-based industries are based in the West, the incentives to develop and use English-based standardized languages will remain.